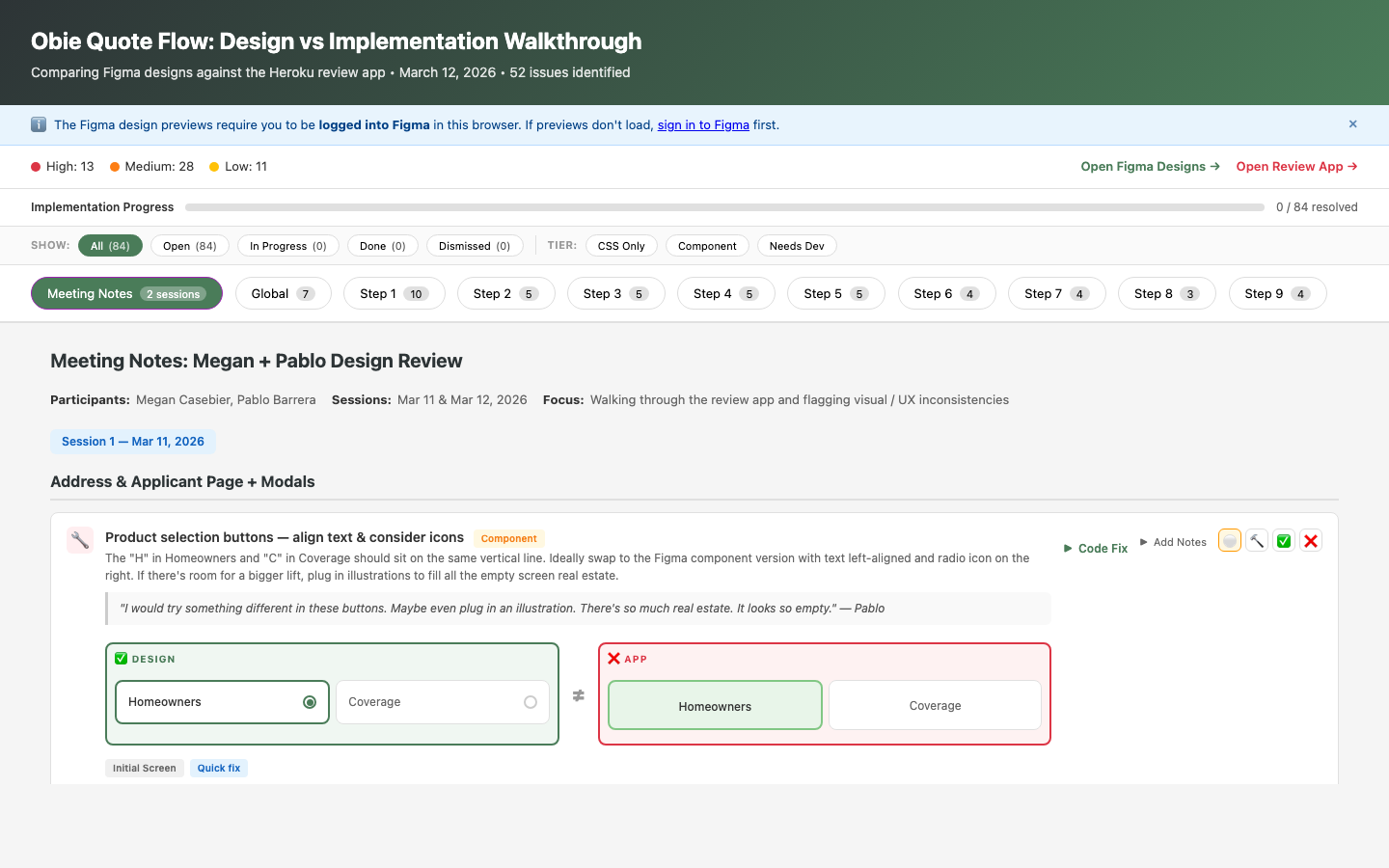

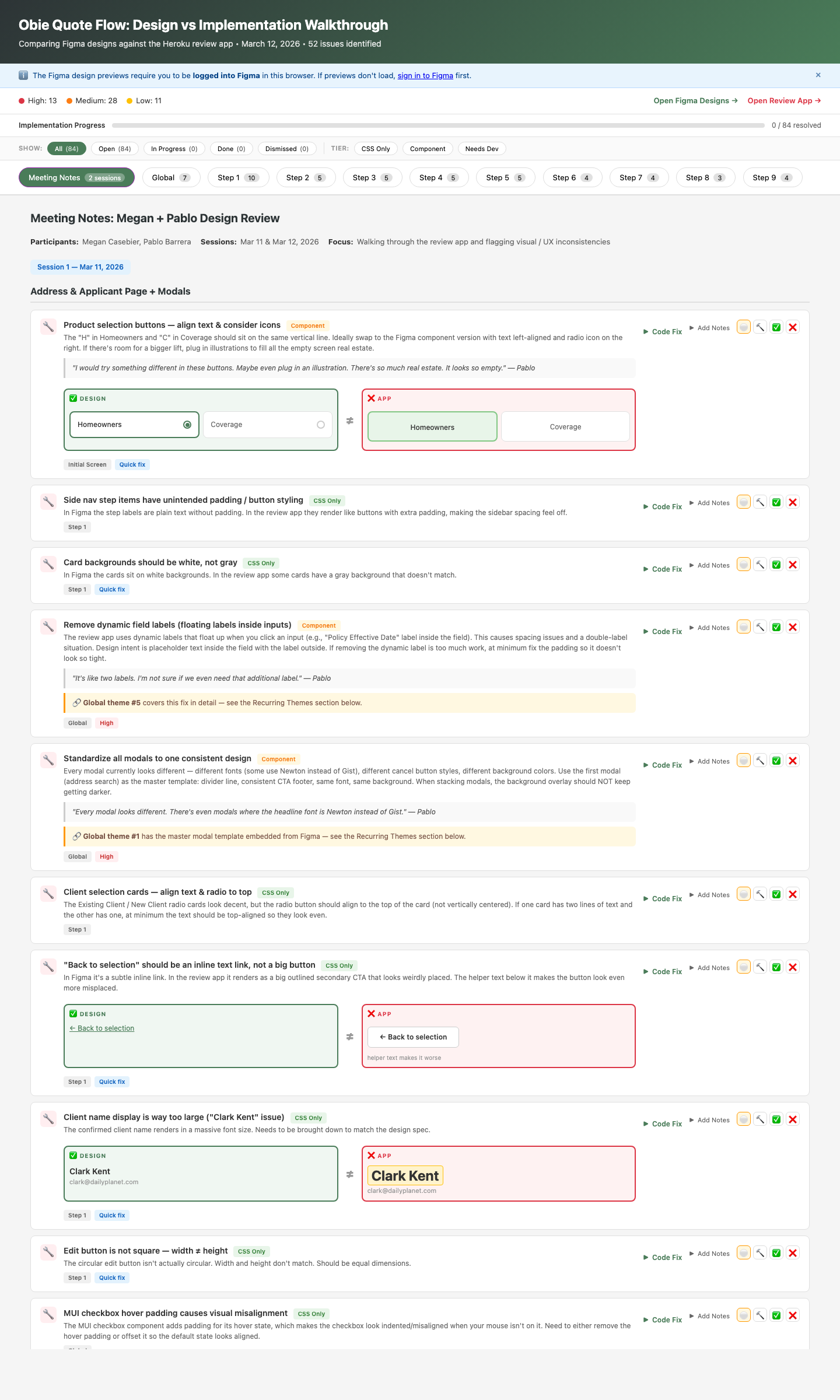

I built a tool that shipped 52 design fixes before launch

Our homeowners product was about to launch with dozens of design-to-code gaps. I recorded a design review with my UI designer, then built a tool that integrated our Figma designs with the live review app. I had AI analyze every screen to find inconsistencies we’d missed, identify systemic patterns, and generate fix-ready documentation that engineering could use without waiting on design.